Figuring on Biggering

New proposals for 'responsible capability scaling' can't be trusted to deliver an honest assessment of AI risk

I meant no harm I most truly did not,

but I had to grow bigger so bigger I got.

I biggered my factory, I biggered my roads,

I biggered the wagons, I biggered the loads,

of the Thneeds I shipped out I was shipping them forth

from the South, to the East, to the West, to the North.

I went right on biggering … selling more thneeds.

And I biggered my money which everyone needs.

From The Lorax, by Dr. Seuss

It's AI Summit week. Policymakers are gearing up for a diplomatic push at Bletchley Park and the Fringe is in full swing. In advance of the Summit meeting, Government have released various policy documents that give a sense of their approach. It's an approach that is dominated by the interests of the AI industry, which means it downplays a range of issues - surveillance, labour rights, misinformation, greenhouse gas emissions etc - that matter much more than the imaginary risks from magical technologies. (Yesterday's White House Executive Order is a more serious piece of policy work.)

Among the UK's proposals to regulate next-generation AI is the idea of 'Responsible Capability Scaling'.

Rachel Coldicutt from Careful Industries had an excellent Twitter thread on this. Her assessment is generous. She concludes that it's a good start. Proposals to think about risks proactively should be encouraged when the tendency is to wait and see. But she is right to point out that the people building AI are not the best placed to assess its risks. They may even be the worst placed. All the incentives point towards postponing proper risk assessment. Small groups of people who are at risk from technologies may be easy to ignore. (This report contains some brilliant case studies of technologies whose risks were overlooked, sometimes for decades. Early warnings of the risks from asbestos first emerged at the end of the 19th century. It took a century to ban it.)

It's good to see some recognition that size matters. Some AI developers argue that it is all that matters. The most enthusiastic among them claim that the magical properties of large language models come from their largeness and that problems of bias, hallucination etc will be solved by growing them even larger. To recall Robert Mercer's favourite phrase, 'there's no data like more data'. Companies recognise that more power might demand more responsibility, which is why they have started publishing policies on scaling.

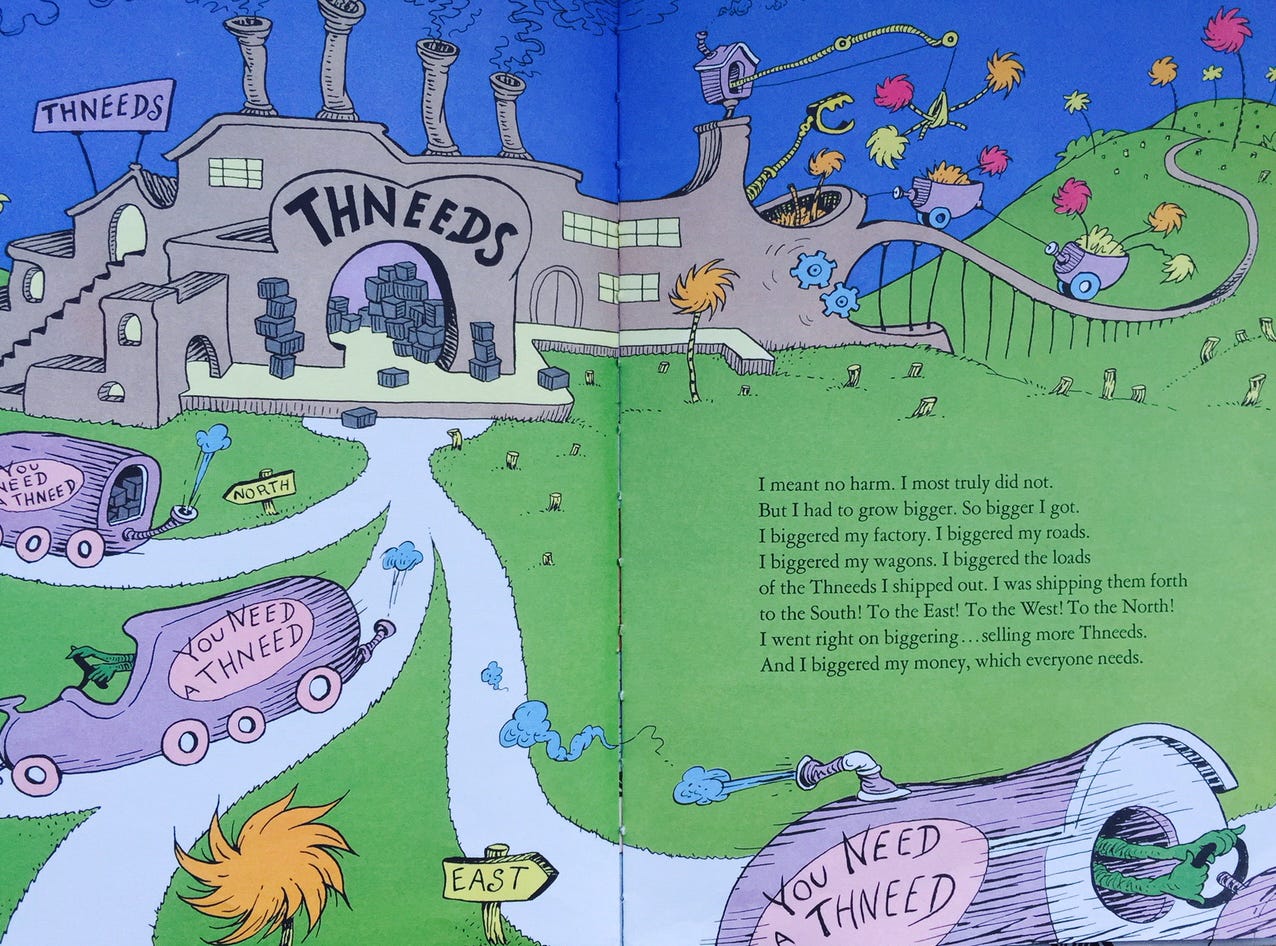

However, this is another lesson in why companies' framing of issues can't just be imported into policy. As Rachel Coldicutt points out, the risks that come from scaling up technologies are not purely technical; they are sociotechnical. The risks of Facebook when it was just a creepy app in a Harvard dorm room were very different from those that emerged when it had billions of users. A car on a street may present safety risks to those in its vicinity, but thousands of cars clogging up, polluting and eventually forcing the redesign of cities pose a very different challenge. The story of the Lorax, Dr Seuss's parable of irresponsible innovation, begins with the felling of just one Truffula tree. But then the entrepreneurial Once-ler admits he is 'figuring on biggering'. The world gets locked into the production of Thneeds, the biggering continues and eventually the environment is ruined.

OpenAI made much of ChatGPT being the fastest growing app in history. Speed and scale matter. In a recent paper, a few of us argued that scale-for-scale's sake has become a new norm of innovation. Led by gurus like Peter Thiel, scaling is now a deliberate strategy to misdirect regulators and consumers in the search for monopoly rents. By the time consequences are realised, companies and their creations may have become too big to fail. Antitrust regulators like the US Federal Trade Commission and the British Competition and Markets Authority are only now waking up to the problems of scale. The trouble with Big Tech might lie more with the 'Big' than the 'Tech'.

If Government are going to act on fast-moving AI, they can't trust companies to scale responsibly by themselves. The proposed AI Safety Institute is an opportunity to properly assess the risks, but it will need to include a much wider range of perspectives.

From The Lorax TV special, 1972